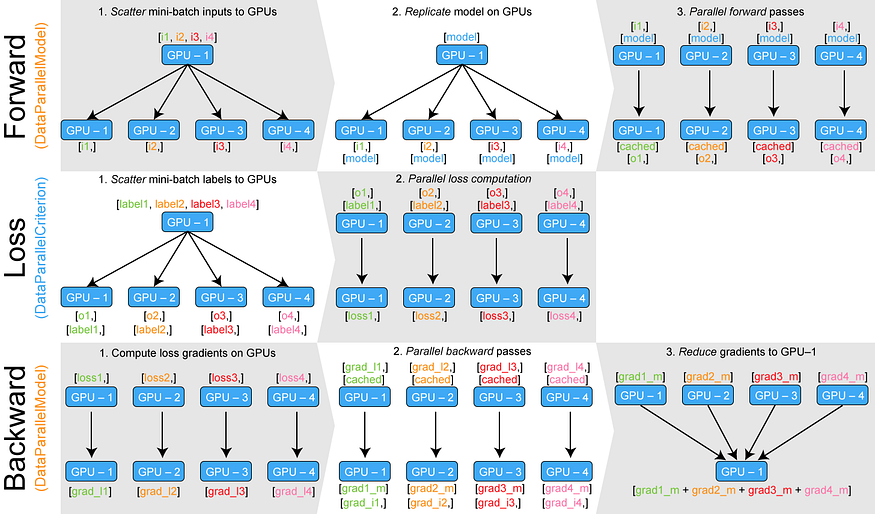

Training neural networks with larger batches in PyTorch: gradient accumulation, gradient checkpointing, multi-GPUs and distributed setups…

Reddit :: Tutorial – train your own llama.cpp mini-ggml-model from scratch!

by u/Evening_Ad6637 in LocalLLaMA

Here I show how to train your own mini ggml model from scratch with llama.cpp! These are currently very small models (20 MB when quantized), and I think this is more for educational reasons: it helped me understand a lot more to “create” my own model from.. nothing. It also helps to understand the parameters and their effects much better.

Otherwise, these mini models could be good enough to be experts in very specific fields, for example only producing text in the style of someone. One model could speak like Cartman from South Park, another could be a poet, and you could bring these ‘persons’ into your general chat or role-play conversations as supporting or minor roles.. to make “group” chats, brainstorming sessions, etc.

And: the discussions on GitHub seem very promising that we will soon be able to fine-tune big pre-trained models like LLaMA or Vicuna and so on. Especially creating (Q)LoRA adapters should be possible soon : )

This will be the next game changer, I think (imagine your model could be fine-tuned incrementally in real time on top of its LoRA adapter, with your current conversation as the dataset. What awesome implications would that have?)

EDIT:

You may need the training script

— Tutorial – train your own llama.cpp mini-ggml-model from scratch!

Educational quiz platform Kahoot launches premium subscription service to make corporate training fun | VentureBeat

https://venturebeat.com/2017/10/03/educational-quiz-platform-kahoot-launches-premium-subscription-service-to-make-corporate-training-fun/l

Education Outrage: Pragmatic Learning: It’s not “fun”

Are games fun? This is an important question for people in training because not only animation but now “gamification” is a new trend. But are “games” fun? Winning is fun. Interacting with others with whom you are playing can be fun. Games can be entertaining and sometimes they are fun, but when we think about making training more effective, we need to think less about having fun and more about what it means to learn.

Source: Education Outrage: Pragmatic Learning: It’s not “fun”

Why the community needs an open credential system | Opensource.com

http://opensource.com/business/15/11/3-reasons-open-badges-credentials?sc_cid=70160000000x3xCAAQ