In the world of data, textual data stands out as being particularly complex. It doesn’t fall into neat rows and columns like numerical data does. As a side project, I’m in the process of developing my own personal AI assistant. The objective is to use the data within my notes and documents to answer my questions. The key benefit is that all data processing will occur locally on my computer, so no documents are uploaded to the cloud and my documents remain private.

Demystifying Text Data with the unstructured Python Library — https://saeedesmaili.com/demystifying-text-data-with-the-unstructured-python-library/

To handle such unstructured data, I’ve found the unstructured Python library to be extremely useful. It’s a flexible tool that works with various document formats, including Markdown, XML, and HTML documents.
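To make the idea of "partitioning" a document concrete, here is a minimal, stdlib-only sketch. It is not the unstructured library's code. In the library itself, the entry point is `partition` from `unstructured.partition.auto`, which detects the file type and returns a list of elements such as `Title`, `NarrativeText`, and `ListItem`. The `Element` class and the `partition_markdown` helper below are hypothetical and only handle a small subset of Markdown (headings, `-`/`*` bullets, and plain paragraphs).

```python
import re
from dataclasses import dataclass


@dataclass
class Element:
    # Hypothetical element type, loosely mirroring unstructured's categories.
    category: str  # "Title", "ListItem", or "NarrativeText"
    text: str


def partition_markdown(md: str) -> list[Element]:
    """Split Markdown into typed elements (hypothetical helper, not unstructured's API)."""
    elements: list[Element] = []
    paragraph: list[str] = []

    def flush() -> None:
        # Join buffered paragraph lines into a single NarrativeText element.
        if paragraph:
            elements.append(Element("NarrativeText", " ".join(paragraph)))
            paragraph.clear()

    for line in md.splitlines():
        stripped = line.strip()
        if not stripped:
            flush()  # a blank line ends the current paragraph
        elif m := re.match(r"#{1,6}\s+(.*)", stripped):
            flush()
            elements.append(Element("Title", m.group(1)))
        elif m := re.match(r"[-*]\s+(.*)", stripped):
            flush()
            elements.append(Element("ListItem", m.group(1)))
        else:
            paragraph.append(stripped)
    flush()
    return elements


note = """# Project ideas

Build a local assistant
that answers questions.

- Use unstructured
- Keep data private
"""

for el in partition_markdown(note):
    print(el.category, "|", el.text)
```

Typed elements like these are what make it practical to chunk notes by section before indexing them for a local assistant.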

AI Reading List 7/6/2023

What I’m reading today.

- Researchers from Peking University Introduce ChatLaw: An Open-Source Legal Large Language Model with Integrated External Knowledge Bases — Includes links to the article and the GitHub repo.

- Why Embeddings Usually Outperform TF-IDF: Exploring the Power of NLP

- Fine-tune an LLM on your personal data: create a “The Lord of the Rings” storyteller

- Open Assistant — In the same way that Stable Diffusion helped the world make art and images in new ways, we want to improve the world by providing amazing conversational AI. (GitHub repo)
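For context on the embeddings vs. TF-IDF link, here is a minimal pure-Python TF-IDF sketch. It uses the plain weighting tf × log(N / df); libraries such as scikit-learn's `TfidfVectorizer` use a smoothed variant by default. The example documents are made up. It illustrates one limitation the article's title hints at: two sentences with the same meaning but no shared words get a similarity of zero, which dense embeddings are designed to avoid. This is an illustration, not the article's own code.

```python
import math
from collections import Counter


def tfidf_vectors(docs: list[str]) -> list[dict[str, float]]:
    """TF-IDF weights per document: tf = count / doc length, idf = log(N / df)."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: how many documents contain each term.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        vectors.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return vectors


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity between two sparse term-weight vectors."""
    dot = sum(w * b.get(term, 0.0) for term, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


docs = [
    "my car needs new tires",
    "the automobile requires fresh tyres",  # same meaning, no shared words
    "my car needs a wash",                   # different meaning, shared words
]
vecs = tfidf_vectors(docs)
print(cosine(vecs[0], vecs[1]))  # no overlapping terms, so the score is 0.0
print(cosine(vecs[0], vecs[2]))  # overlapping terms give a positive score
```

Because TF-IDF only matches exact tokens, the paraphrase scores lower than the unrelated sentence that happens to share words.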

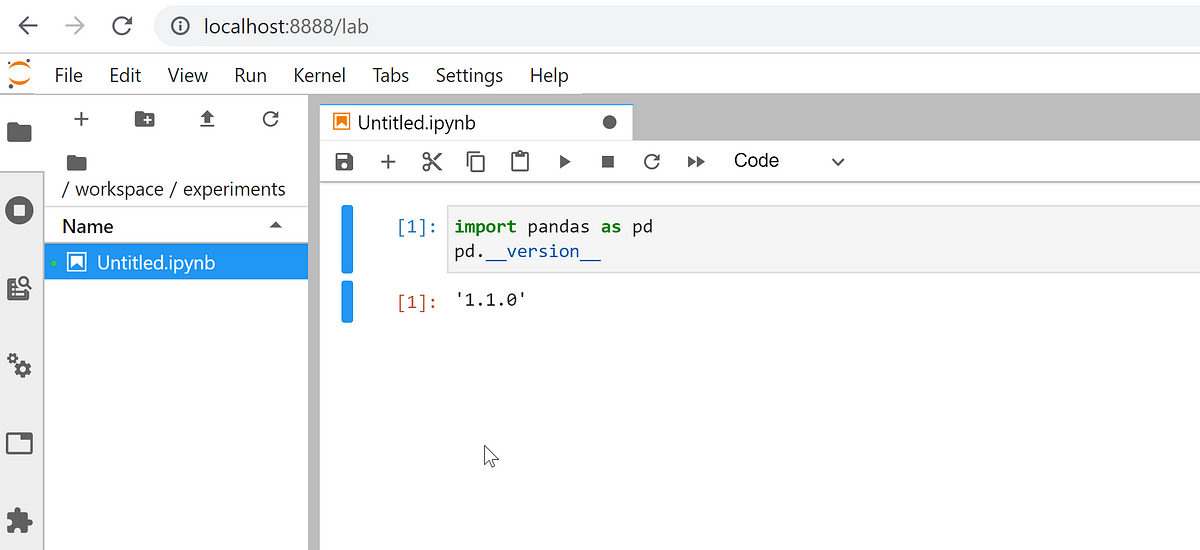

Configuring Jupyter Notebook in Windows Subsystem Linux (WSL2) | by Cristian Saavedra Desmoineaux | Towards Data Science

Here’s a great quick-start guide to getting Jupyter Notebook and JupyterLab running in a Miniconda environment on WSL2 with Ubuntu. Once you’ve worked through the steps, you’ll have a complete data science environment on your Windows machine.

I am going to explain how to configure Windows 10 and Miniconda to work with Notebooks using WSL2.

AI Reading List 7/5/2023

The longer holiday weekend edition.

- Opportunities and Risks of LLMs for Scalable Deliberation with Polis — Polis is a platform that leverages machine intelligence to scale up deliberative processes. In this paper, we explore the opportunities and risks associated with applying Large Language Models (LLMs) towards challenges with facilitating, moderating and summarizing the results of Polis engagements.

- How I Use PandasAI to Complete 10 Most Frequent Tasks in Data Science — A Quick Introduction and Development Guide For Pandas AI

- Introduction to Haystack — Haystack is an open-source framework for building search systems that work intelligently over large document collections. Learn more about Haystack and how it works.

- Master Semantic Search at Scale: Index Millions of Documents with Lightning-Fast Inference Times using FAISS and Sentence Transformers — Dive into an end-to-end demo of a high-performance semantic search engine leveraging GPU acceleration, efficient indexing techniques, and robust sentence encoders on datasets up to 1M documents, achieving 50 ms inference times.

- Natural Language to SQL using an Open Source LLM

- Leveraging LangChain, Pinecone, and LLMs for Document Question Answering: An Integrated Approach — Document Question Answering (DQA) is a crucial task in Natural Language Processing (NLP), aiming to develop automated systems capable of understanding and extracting relevant information from textual documents to answer user queries. With recent advancements in Large Language Models (LLMs) like ChatGPT and innovative tools and technologies such as LangChain and Pinecone, a new integrated approach to DQA has emerged.

- LlamaIndex: the ultimate LLM framework for indexing and retrieval — LlamaIndex, previously known as the GPT Index, is a remarkable data framework aimed at helping you build applications with LLMs by providing essential tools that facilitate data ingestion, structuring, retrieval, and integration with various application frameworks.
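Several of these links (FAISS with Sentence Transformers, the LangChain and Pinecone DQA pipeline, LlamaIndex) share the same core retrieval step: embed the documents, then return the stored vectors closest to an embedded query. Below is a minimal stdlib sketch of exact (brute-force) search, assuming L2-normalized vectors so that the inner product equals cosine similarity. That is the computation an exact FAISS index such as `IndexFlatIP` performs; the speedups the FAISS article describes come from GPU acceleration and more efficient indexing. The `FlatIPIndex` class is hypothetical, and the three-dimensional vectors are made-up stand-ins for real sentence embeddings.

```python
import math


def normalize(v: list[float]) -> list[float]:
    """Scale a vector to unit length so inner product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v] if norm else list(v)


class FlatIPIndex:
    """Hypothetical exact inner-product index: compares the query to every vector."""

    def __init__(self) -> None:
        self.vectors: list[list[float]] = []

    def add(self, vecs: list[list[float]]) -> None:
        self.vectors.extend(normalize(v) for v in vecs)

    def search(self, query: list[float], k: int) -> list[tuple[float, int]]:
        """Return the top-k (score, doc_id) pairs, highest score first."""
        q = normalize(query)
        scores = [
            (sum(a * b for a, b in zip(q, v)), i)
            for i, v in enumerate(self.vectors)
        ]
        # Stable sort on score only, so ties keep insertion order.
        scores.sort(key=lambda pair: pair[0], reverse=True)
        return scores[:k]


docs = ["Notes on FAISS indexing", "Grocery list", "Sentence Transformers tutorial"]
embeddings = [[0.9, 0.1, 0.0], [0.0, 0.2, 0.9], [0.8, 0.3, 0.1]]  # toy vectors

index = FlatIPIndex()
index.add(embeddings)
for score, doc_id in index.search([1.0, 0.2, 0.0], k=2):
    print(f"{score:.3f}  {docs[doc_id]}")
```

Brute force costs one dot product per stored vector per query, which is fine for a personal notes collection; approximate indexes trade a little accuracy for much faster search at the million-document scale.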