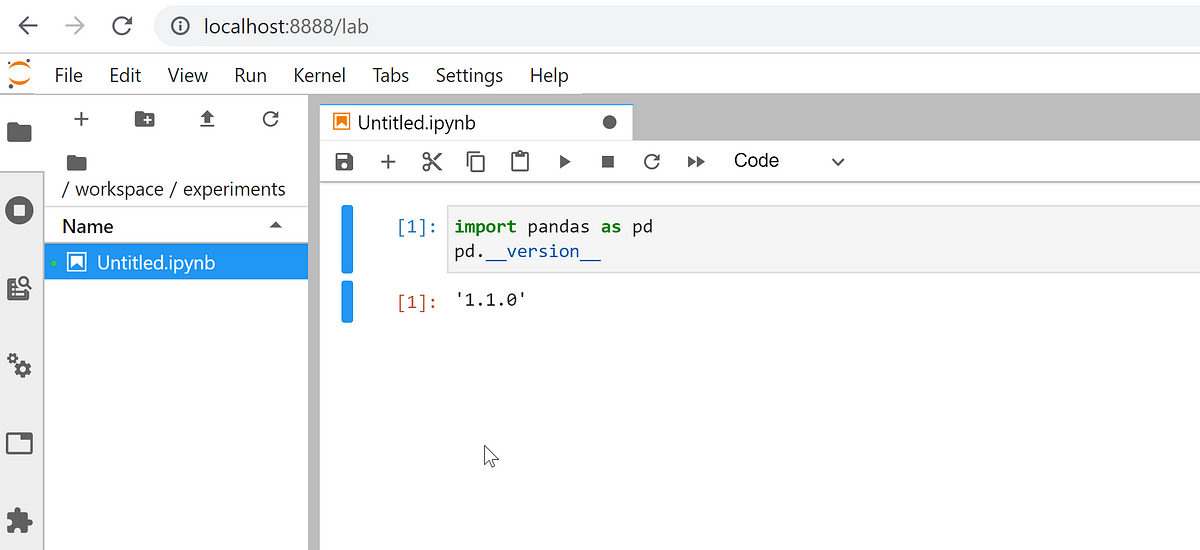

Mountpoint for Amazon S3 is an open source file client that makes it easy for your file-aware Linux applications to connect directly to Amazon Simple Storage Service (Amazon S3) buckets. Announced earlier this year as an alpha release, it is now generally available and ready for production use on your large-scale read-heavy applications: data lakes, machine learning training, image rendering, autonomous vehicle simulation, ETL, and more. It supports file-based workloads that perform sequential and random reads, sequential (append only) writes, and that don’t need full POSIX semantics.

— Mountpoint for Amazon S3 – Generally Available and Ready for Production Workloads
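
The access patterns named in the announcement (sequential reads, random reads, and sequential writes) map onto ordinary file calls. The following is a minimal sketch in Python, not code from the Mountpoint project: the helper names are made up for illustration, and it assumes a bucket has already been mounted (for instance with the `mount-s3` command) at a hypothetical directory such as `/mnt/s3`. The functions use only standard file I/O, so they behave the same against any local directory.

```python
def read_sequential(path, chunk_size=1024 * 1024):
    """Stream a file front to back and return the number of bytes read."""
    total = 0
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            total += len(chunk)
    return total


def read_range(path, offset, length):
    """Random read: seek to a byte offset and read up to `length` bytes."""
    with open(path, "rb") as f:
        f.seek(offset)
        return f.read(length)


def write_sequential(path, chunks):
    """Write a new file front to back, never seeking backwards.

    This mirrors the sequential-write pattern the announcement describes;
    in-place edits of existing data are deliberately not attempted.
    """
    written = 0
    with open(path, "wb") as f:
        for chunk in chunks:
            written += f.write(chunk)
    return written
```

With a mounted bucket, a call such as `read_range("/mnt/s3/data/part-0000.bin", 4096, 512)` would fetch 512 bytes starting at offset 4096; the path and object key here are hypothetical.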